AI in Mobile Apps: How to Make an App like Siri

Today, everybody knows a girl named Siri and her besties Alexa, Cortana, and Google Assistant. These artificial intelligence pals have been hanging out in our houses, cars, and phones. Voice assistants are taking the world by storm, and there’s nothing we can do but join the party. The generation raised alongside them, “the Siri gen”, is our future, and for them voice assistants are not a novelty but an essential. So the question “How do I make an app like Siri?” is on everybody’s mind today.

Now, I’ll guide you into the future and explain everything you need to know about voice assistants and the ways to build one for your mobile app. Use the plan below to skip to the question that interests you:

- Why personal assistants like Siri are so popular
- What Siri can do
- Siri’s business model canvas
- Technologies in Mobile Assistants
- How to make a voice assistant like Siri
  - Junior method
  - Middle method
  - Senior method
- 5 tips for building artificially intelligent voice assistants

Let’s talk Siri

Before Apple acquired it in 2010, Siri was a third-party app created by Siri, Inc. and available for download on the App Store. It already had the basic features we know today. The world met Apple’s Siri on October 14, 2011, when it shipped as a feature of the iPhone 4S.

Why personal assistants like Siri are so popular

The Siri personal AI assistant we know today is way smarter and more convenient. And let’s be honest, everybody knows that name.

So why is Siri so famous? Let’s find out!

Here are a few reasons why Siri is so popular:

- Simple. Siri’s message is that touch interfaces are overly complex. It lets the device do more, so you don’t have to.

- Fast. Voice interfaces are faster than touch ones: all you need to do is ask, and you get the results. Talking comes naturally to people; we don’t even think of it as an interface.

- Emotional attachment. Apps similar to Siri build up users’ emotional attachment to them. Being able to talk to a personal assistant gives people the illusion of intimacy and personal connection. This comes in extremely handy when you want your customers to stay loyal to your brand.

- Fascinating. Children take this technology for granted, but for the older generation it is a step into the future. Using a voice assistant, we can feel like a movie character who travels to the future or to another planet. Sure, people get used to it, and after a while it won’t seem so out of this world, but by then they won’t be able to imagine life without it; it will simply have become an essential part of their lives.

What Siri can do

Siri can do so many things that listing them all would take a while, so here is a quick overview of Siri’s features:

- Phone and text actions: call, text, email, or FaceTime someone; read your messages aloud; and send messages you dictate.

- Provide basic information such as today’s weather and currency exchange rates.

- Set reminders and schedule events.

- Take care of device settings like a camera (Take a picture), Wi-Fi (Turn on/off), screen (Increase the brightness), etc.

- Internet search (definitions, news, pictures, search Twitter for, etc.).

- Navigation tasks like “Show me the way home”, “What’s the traffic like today?”, “Find directions to”, etc.

- Entertainment (What basketball games are on today?, What are some movies playing near me?).

- Engage with iOS-integrated apps (Pause Apple Music, Like this song).

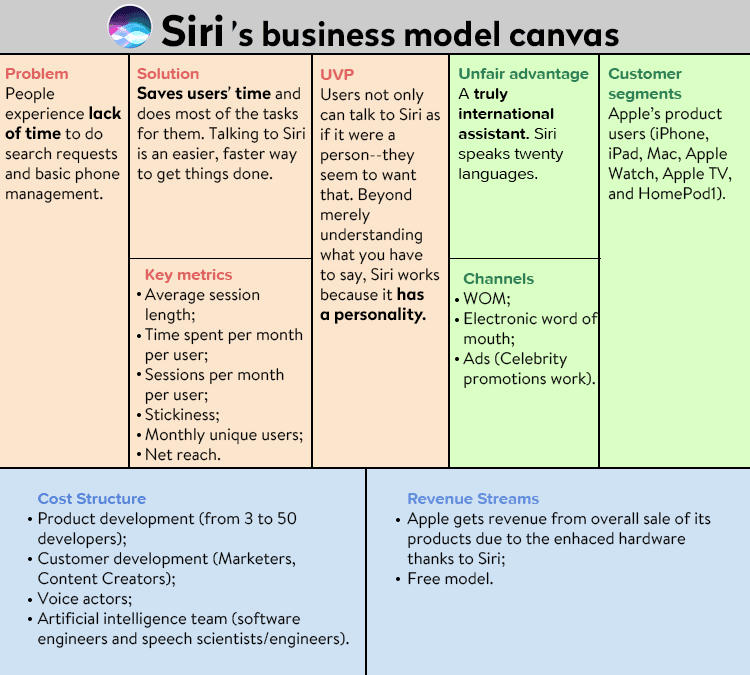

Siri’s business model canvas

Although Apple stays quiet about the specifics, the Siri business model canvas looks pretty much like this:

Technologies in Mobile Assistants

Voice assistants consist of pretty much the same set of technologies. So what gets machines running?

- Speech to text (STT) engine. The engine converts the user’s voice to text. The voice can be an audio file or a user’s speech stream.

- Text to speech (TTS) engine. Converts text to speech. This is particularly useful when driving or cooking, so users don’t have to stop what they are doing to interact with the voice assistant. It also plays a big part in humanizing the assistant.

- Tagging (Intelligence). Tagging helps a voice assistant understand what a user wants. For example, if the user asks, “Will I need an umbrella tonight?”, the tagging engine can tag the request with a weather or calendar tag.

- Noise reduction engine. There is hardly ever a quiet, perfect environment for voice requests; there is always a car driving by or a dog barking. The noise reduction engine filters out this background noise so your assistant can understand you.

- Voice biometrics. This is a form of authentication: your assistant recognizes your voice and responds only to your commands. Siri has it; you can teach it how you say “Hey Siri”.

- Voice recognition. This is the machine learning component that drives all personal assistant mobile apps. It lets the assistant understand what you’re saying; basically, it puts meaning behind your words.

- Speech compression engine. This engine helps the assistant respond faster: it compresses the user’s voice so it reaches the server sooner. You can use the G.711 algorithm for this purpose; it is lossy, but it keeps speech intelligible at a very low computational cost.

- User interface. The UI of a voice assistant consists of two parts: the voice and the callouts. The voice is what users hear in response to their question, and the callouts are what they see on the screen.
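To make the speech compression step more concrete, here is a minimal sketch of G.711 μ-law encoding and decoding. Note that G.711 is lossy: μ-law maps each signed 16-bit linear sample to an 8-bit logarithmic code, halving the data (64 kbit/s at 8 kHz) while keeping quantization error small relative to the signal. The sketch follows the bias-and-clip approach found in common public-domain implementations; the function names are illustrative, and it has not been checked against the ITU test vectors, so treat it as a teaching aid rather than a production codec.

```python
from typing import Iterable

BIAS = 0x84    # 132, added to the magnitude before finding the segment
CLIP = 32635   # largest magnitude that still fits in 15 bits after adding BIAS


def linear_to_ulaw(sample: int) -> int:
    """Encode one signed 16-bit PCM sample as an 8-bit mu-law code."""
    sign = 0x80 if sample < 0 else 0x00
    magnitude = min(abs(sample), CLIP) + BIAS
    # Exponent (segment 0-7) = index of the highest set bit minus 7.
    exponent = max((magnitude >> 7).bit_length() - 1, 0)
    # Keep only the 4 bits just below the leading bit; the rest is discarded (lossy).
    mantissa = (magnitude >> (exponent + 3)) & 0x0F
    # mu-law codes are stored with all bits inverted.
    return ~(sign | (exponent << 4) | mantissa) & 0xFF


def ulaw_to_linear(code: int) -> int:
    """Decode an 8-bit mu-law code to the midpoint of its quantization step."""
    code = ~code & 0xFF
    sign = code & 0x80
    exponent = (code >> 4) & 0x07
    mantissa = code & 0x0F
    magnitude = (((mantissa << 3) + BIAS) << exponent) - BIAS
    return -magnitude if sign else magnitude


def compress(samples: Iterable[int]) -> bytes:
    """Turn 16-bit samples (2 bytes each) into 1 byte each."""
    return bytes(linear_to_ulaw(s) for s in samples)


if __name__ == "__main__":
    frame = [0, 1000, -1000, 32767]
    decoded = [ulaw_to_linear(b) for b in compress(frame)]
    print(decoded)  # [0, 988, -988, 32124]
```

The round trip shows the trade-off: quiet samples come back almost exactly, while loud samples are stored with coarser steps, which is where the ear is least sensitive to error.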

How to make a voice assistant like Siri

Voice assistants can be stand-alone applications or part of a mobile app. So how do you make your own Siri-like artificial intelligence?

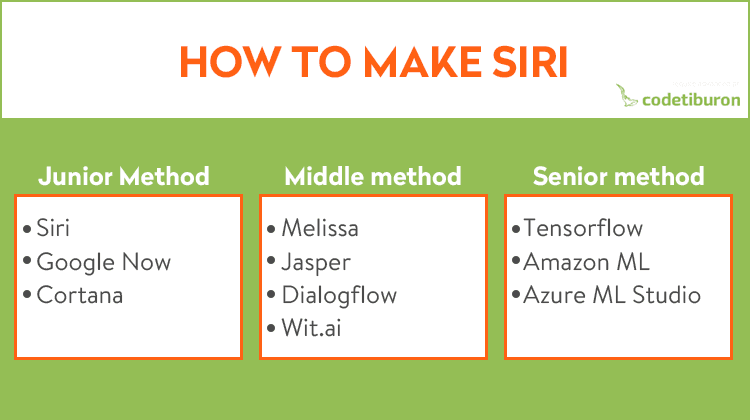

You can create a Siri-like voice assistant in three ways; for clarity, let’s call them the junior, middle, and senior methods.

- Junior method. This method is considered an easy one. You can integrate voice assistant technology into your mobile app with the help of certain APIs and special AI app development tools.

- Middle method. The second method is a bit trickier. This way, you create a voice assistant by using open-source services and APIs.

- Senior method. This method requires a complete development of a voice assistant from scratch and its further integration into your mobile app.

Junior method

Mobile AI app development is hard, and the junior method makes it easier. It is based on integrating trusted technologies from leading companies. As examples, we will look at three of the most popular voice assistants: Siri, Google Now, and Cortana.

How to integrate Siri into a mobile app

If you have been considering integrating a voice assistant into your mobile app, you’ve probably noticed that Siri used to be unavailable to third-party apps. That changed in 2016, when Apple announced iOS 10 at WWDC and opened Siri to apps in a few areas. You can use Siri if your app falls into one of these categories:

- Audio and video calls

- Messaging and contacts

- Payments via Siri

- Photo search

- Workouts

- Car booking

So how do you make your own Siri?

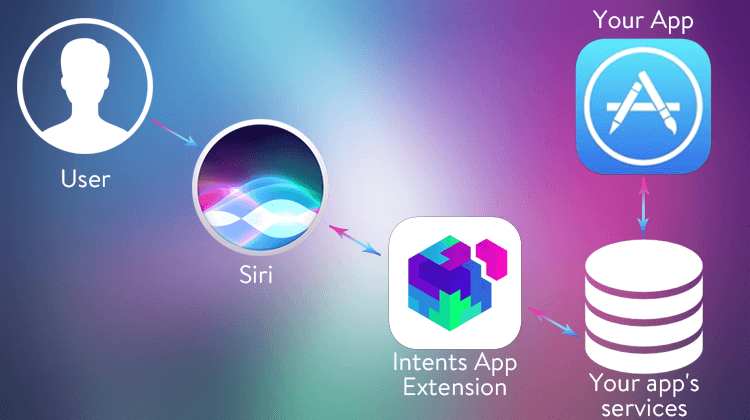

To integrate Siri into your app, you’ll need Apple’s SiriKit. It supports two types of app extensions:

- An Intents app extension. Handles the actions users request in your app. For instance, users might want to call someone, send a message, or book a ride.

- An Intents UI app extension. This optional extension displays your brand and custom content in the Siri interface.

SiriKit defines intents as the types of requests a user can make. To make it clear which intents your app might support, related intents are grouped into domains. For example, the messages domain contains intents for sending messages, searching for messages, and marking messages as read or unread.

This is how a Siri-integrated app works: the user makes a voice request, Siri turns it into an intent with its parameters and passes it to your Intents app extension, which processes the data, uses your app’s services if needed, and returns a result that is shown to the user.
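Real SiriKit extensions are written in Swift or Objective-C against Apple’s Intents framework, so the sketch below is not SiriKit code. It is a minimal, language-neutral model in Python of the flow just described, assuming a hypothetical messages-domain handler: the intent is routed to the extension registered for its domain, then goes through resolve, confirm, and handle steps, mirroring the three stages SiriKit uses. Every class, function, and parameter name here is made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Intent:
    """A parsed voice request: its domain, the action, and its parameters."""
    domain: str                                  # e.g. "messages"
    name: str                                    # e.g. "send_message"
    params: Dict[str, str] = field(default_factory=dict)


@dataclass
class Result:
    success: bool
    message: str


class MessagesExtension:
    """Hypothetical stand-in for an Intents app extension (messages domain)."""

    def __init__(self, contacts):
        # Stands in for "your app's services": here, just a contact list.
        self.contacts = set(contacts)

    def resolve(self, intent: Intent) -> bool:
        # Resolve: check that the required parameters are present and valid.
        return (intent.name == "send_message"
                and intent.params.get("recipient") in self.contacts
                and bool(intent.params.get("text")))

    def confirm(self, intent: Intent) -> bool:
        # Confirm: a last check that the app can carry out the request now.
        return True

    def handle(self, intent: Intent) -> Result:
        # Handle: perform the action and report the outcome back to Siri.
        return Result(True, f"Message sent to {intent.params['recipient']}")


def dispatch(intent: Intent, extensions: Dict[str, MessagesExtension]) -> Result:
    """Route an intent to the extension registered for its domain."""
    extension = extensions.get(intent.domain)
    if extension is None:
        return Result(False, f"No extension handles the '{intent.domain}' domain")
    if not extension.resolve(intent):
        return Result(False, "Could not resolve the request parameters")
    if not extension.confirm(intent):
        return Result(False, "The request was not confirmed")
    return extension.handle(intent)


if __name__ == "__main__":
    extensions = {"messages": MessagesExtension(["Anna", "Bob"])}
    request = Intent("messages", "send_message", {"recipient": "Anna", "text": "On my way"})
    print(dispatch(request, extensions).message)  # Message sent to Anna
```

Splitting the work into resolve, confirm, and handle lets the assistant ask the user for a missing or ambiguous parameter before anything irreversible happens.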

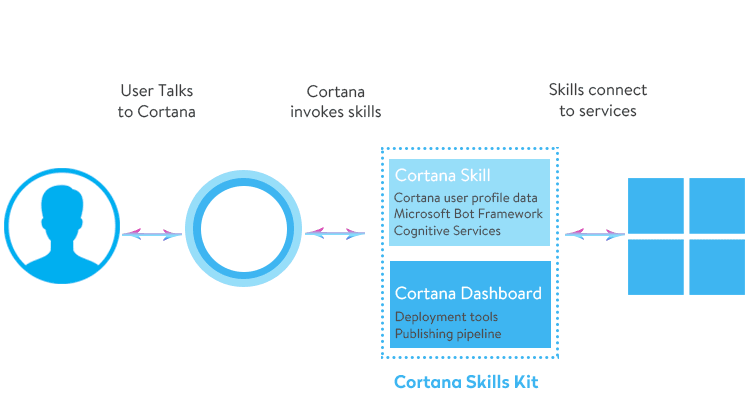

How to make a Cortana-based mobile application

Microsoft lets you integrate the Cortana voice assistant into your mobile or desktop app. Users can even control your app by voice without directly calling Cortana. The Cortana Dev Center provides detailed information on using Cortana skills in your app and on integrating the app name into a voice command.

You can integrate the app’s name into a voice command in three ways:

- Prefixal. The app name comes before the command: ‘Fit Life, start a workout’.

- Infixal. The app name comes in the middle of the command: ‘Start my Fit Life workout, please!’

- Suffixal. The app name comes at the end of the command: ‘Start a workout with Fit Life’.
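Cortana does this matching for you, so the following is only an illustration of the three placements, not Cortana’s API. It is a small Python sketch, with a hypothetical `invocation_position` helper, that reports whether an app name appears as a prefix, infix, or suffix of a transcribed command, matching whole words and ignoring case and surrounding punctuation.

```python
import re
from typing import Optional


def invocation_position(command: str, app_name: str) -> Optional[str]:
    """Return 'prefix', 'infix' or 'suffix' depending on where app_name appears,
    or None if the command does not mention the app at all."""
    # Ignore leading/trailing whitespace and punctuation such as "!" or "."
    text = command.strip(" \t,.!?")
    # Match the app name as whole words, case-insensitively
    match = re.search(r"\b" + re.escape(app_name) + r"\b", text, re.IGNORECASE)
    if match is None:
        return None
    if match.start() == 0:
        return "prefix"
    if match.end() == len(text):
        return "suffix"
    return "infix"


if __name__ == "__main__":
    for cmd in ("Fit Life, start a workout", "Start a workout with Fit Life", "Start a workout"):
        print(cmd, "->", invocation_position(cmd, "Fit Life"))
```

For example, ‘Fit Life, start a workout’ comes out as a prefix, ‘Start a workout with Fit Life’ as a suffix, and a command without the app name returns `None`, meaning the request is not meant for your app.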

You can activate two modes in Cortana for your app: background, for simple commands, and foreground, for more complex commands that require specifying parameters.

The Google assistant integration for mobile apps

Well, Google has its perks for developers: for example, its design requirements are less strict, and the approval process on Google Play is noticeably shorter and easier than on Apple’s App Store.

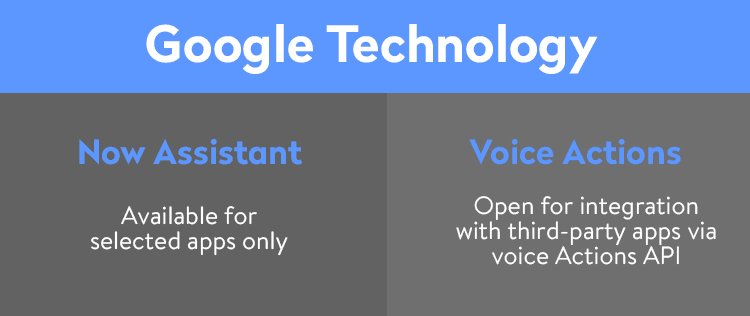

The thing is, Google is cautious about voice assistant integration too. Today only selected apps like eBay, Lyft, and Airbnb can use Google Assistant. But keep your chin up! There is still a chance for you: integrate Google Now into your mobile app. Google Now is an artificial intelligence assistant that can not only understand requests and give answers but also learn, analyze, and draw conclusions. To integrate it into your mobile app, you’ll need to register the app with Google.

Another Google technology is open for integration with third-party apps via the Voice Actions API. Voice Actions is a narrower take on Google Assistant, based on speech recognition and information search.

The Voice Actions API comes with a detailed developer guide that explains how to integrate voice AI features into mobile apps and even wearable apps.

Middle method

When building an AI app with a voice assistant, you might rely on external open-source solutions. This method suits developers who are familiar with machine learning. Here are some tools, covering both mobile and web services, that come in handy when creating voice assistant AI apps:

- Melissa. This system has many parts that can be modified without changing the main algorithm, so it is a perfect fit for newcomers to voice assistant development. Melissa speaks, takes notes, reads the news, uploads pictures, plays music, and works on OS X, Windows, and Linux.

- [Jasper](https://jasperproject.github.io/). This system is perfect for those who want to develop large parts of their assistant’s intelligence themselves, without outside help. Jasper runs on the Raspberry Pi Model B. It can ‘listen’ thanks to its active module and ‘learn’ with the help of its passive module. It studies your habits and runs all day long.

- Dialogflow. It supports voice recognition and speech-to-text, can perform relevant tasks, and can analyze and draw conclusions. Dialogflow has free and paid versions. The paid one works on a private cloud, so it suits those who rank privacy high on their list of priorities.

- Wit.ai. Wit.ai is quite similar to Dialogflow. It requires you to set up two components: intents (what the user requests) and entities (the intent’s characteristics). Wit.ai has a long list of ready-made intents, so you don’t have to build them all yourself. And it is completely free for both private and public apps.
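Both Dialogflow and Wit.ai turn an utterance into an intent plus its entities. The sketch below shows that tagging idea with hand-written keyword rules standing in for a trained model, reusing the umbrella question from earlier. The rule tables, the intent and entity names, and the shape of the returned dictionary are all hypothetical; they do not match the actual Wit.ai or Dialogflow response formats.

```python
import re
from typing import Dict, List, Optional

# Hypothetical rules: intent name -> trigger words.
INTENT_KEYWORDS: Dict[str, List[str]] = {
    "get_weather": ["weather", "umbrella", "rain", "forecast", "temperature"],
    "create_reminder": ["remind", "reminder"],
    "check_calendar": ["calendar", "meeting", "schedule"],
}

# Hypothetical entity patterns: entity name -> regular expression.
ENTITY_PATTERNS: Dict[str, str] = {
    "time": r"\b(?:this morning|this evening|tonight|today|tomorrow)\b",
}


def parse(utterance: str) -> dict:
    """Tag an utterance with its most likely intent and any entities found."""
    text = utterance.lower()
    words = set(re.findall(r"[a-z']+", text))
    # Score each intent by how many of its trigger words occur in the utterance.
    scores = {intent: sum(word in words for word in triggers)
              for intent, triggers in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    intent: Optional[str] = best if scores[best] > 0 else None
    # Collect every entity value matched by the patterns.
    entities = {name: re.findall(pattern, text)
                for name, pattern in ENTITY_PATTERNS.items()
                if re.search(pattern, text)}
    return {"text": utterance, "intent": intent, "entities": entities}


if __name__ == "__main__":
    print(parse("Will I need an umbrella tonight?"))
    # {'text': 'Will I need an umbrella tonight?', 'intent': 'get_weather', 'entities': {'time': ['tonight']}}
```

Keyword rules break down quickly on paraphrases, which is exactly why services like Wit.ai and Dialogflow learn intents from example utterances instead.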

Senior method

This method is for hardcore developers with experience in building machine learning applications from scratch. Developing a voice-activated app on your own gets easier if you use services that provide powerful, efficient computing resources.

Google’s TensorFlow

TensorFlow is an open source software library for numerical computation using data flow graphs. Thanks to its flexible architecture, you can deploy computation to multiple CPUs or GPUs on a desktop, server, or mobile device with a single API.

So how do you develop an AI voice assistant? With TensorFlow, you can create a mobile AI voice assistant trained on specific data, like SpeakEasy AI, a chatbot built on a neural model trained on millions of Reddit comments.

TensorFlow has been used to build multiple Google products and features, including speech recognition, Gmail, Google Photos, and Search.

Why use TensorFlow:

- Flexible

- Portable

- Auto-differentiation

Amazon Machine Learning

Amazon Machine Learning (AML) is a machine learning service that lets developers build complex, intelligent machine learning apps. AML supports multiple data sources, lets developers create data source objects from data in Amazon Redshift and MySQL databases, and supports binary classification, multi-class classification, and regression models.

AML is built on the same simple, scalable, dynamic, and flexible ML technology used by Amazon’s internal data scientist community.

Azure ML Studio

Azure ML Studio is a Microsoft product that lets users create and train models, which can then be published as APIs. Each user account gets up to 10GB of storage for model data. For bigger models, you can connect your own Azure storage to the service.

With Azure, you get a wide range of algorithms. If you just want to try it and see how it works, you can use the service anonymously, without creating an account, for up to eight hours.

Azure ML Studio’s features:

- Natural language processing

- Recommendation engine

- Pattern recognition

- Computer vision

- Predictive modeling

5 tips for building artificially intelligent voice assistants

Choose the best platform

When thinking about how to create your own virtual assistant, start by researching the existing platforms. Today there are all kinds of platforms and services for creating an AI personal assistant. List all the features you need, weigh the pros and cons of each platform, and choose the right one for your mobile application.

Keep the end user in mind

When developing a virtual assistant app, always think about your end users: who they are, how old they are, and what tasks they want your assistant to complete. With these questions in mind, choose the right voice, tone, language, and tasks for your AI personal assistant so it makes the end user’s life easier.

Select useful features

When making a feature list for a voice assistant app, remember this: an AI assistant that does a few tasks well is better than a feature-packed assistant that doesn’t perform a single task perfectly. Choose the most necessary features and make your assistant perform them perfectly.

Always-on interface

For an AI personal assistant app, an always-on interface saves time and eliminates unnecessary taps, so the user can call the voice assistant at any time without extra effort.
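In practice, always-on listening usually relies on a small on-device wake-word detector running on the audio stream, similar to “Hey Siri”. As a simplified stand-in, the sketch below works on already-transcribed text instead: it checks whether an utterance starts with a wake phrase and, if so, returns the command that follows. The wake phrases and the `extract_command` helper are hypothetical.

```python
import re
from typing import Optional, Sequence

# Hypothetical wake phrases for an app called "Fit Life".
WAKE_PHRASES = ("hey fit life", "ok fit life")


def extract_command(transcript: str, wake_phrases: Sequence[str] = WAKE_PHRASES) -> Optional[str]:
    """If the transcript starts with a wake phrase, return the rest of it
    (possibly empty); otherwise return None so the assistant stays idle."""
    for phrase in wake_phrases:
        # Wake phrase at the very start, as whole words, in any capitalization,
        # followed by optional spaces or punctuation.
        pattern = r"\s*" + re.escape(phrase) + r"\b[\s,.!?]*"
        match = re.match(pattern, transcript, re.IGNORECASE)
        if match:
            return transcript[match.end():].strip()
    return None


if __name__ == "__main__":
    print(extract_command("Hey Fit Life, start a workout"))  # start a workout
    print(extract_command("Start a workout"))               # None
```

An empty string means the user said only the wake phrase, so the assistant should start listening for the actual command.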

Give it personality

In the end, it is the user who decides whether to keep using your app, so the way to make them stay is to help them form a personal attachment to it. Give your voice assistant a personality that your end users will like.

Conclusion

Artificial intelligence in mobile applications is a big trend today, and everyone wants a bite of this delicious pie; a voice assistant is just one way to get it. Now you know the strategies for building apps like Siri. But making a copy is easy. The tough part is figuring out how your voice assistant will stand out from the others. Make it better with CodeTiburon.