Today machine learning and artificial intelligence are everywhere, and these technology trends are taking the world by storm. Mobile application development is one of the areas that can benefit from machine learning. You might be wondering:

But what exactly is machine learning and how can I use it in my mobile app?

This article will walk you through the basics and show where machine learning can fit into your app!

What is machine learning

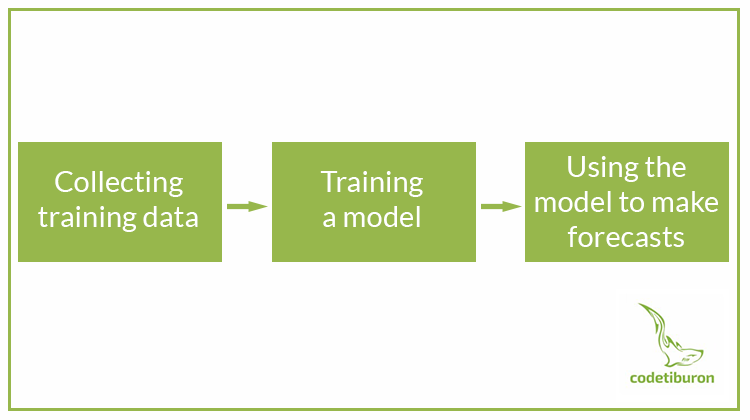

Machine learning is teaching computers to recognize patterns in the same way as human brains do. It is learning from examples and experience instead of hard-coded programming rules and using that learning to answer questions.

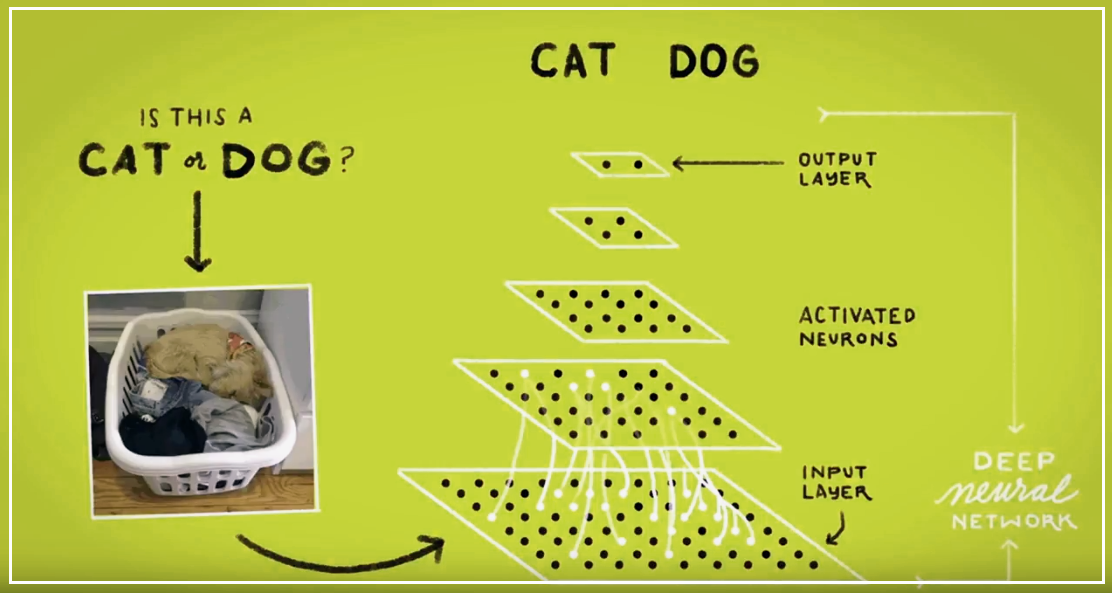

Cat or Dog?

For a child, this question is really simple, but for a machine, the route to the right answer can be quite challenging. The image above, showing the problem of telling a cat from a dog, is a great example of what deep neural networks do. All the data enters the bottom layer through its neurons. Each input (pixel) goes through a multiplier. When that multiplication yields a value above a certain threshold, it moves on to the next layer. This process repeats until it reaches the output layer, which gives you the answer: cat or dog. These multipliers are what the machine learns through training.
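To make the multiply-and-threshold idea concrete, here is a minimal sketch in plain Python. The weights are hand-picked for illustration (a real network learns them during training), and the function names, the three-pixel "image", and the threshold of 0 are all assumptions of this sketch rather than part of any real library.

```python
def layer(inputs, weights, threshold=0.0):
    """Run one layer: each neuron multiplies the inputs by its weights,
    sums them, and passes the value on only if it exceeds the threshold."""
    outputs = []
    for neuron_weights in weights:
        total = sum(x * w for x, w in zip(inputs, neuron_weights))
        outputs.append(total if total > threshold else 0.0)
    return outputs


def classify(pixels, hidden_weights, output_weights):
    """Feed pixels through a hidden layer and an output layer.
    Output neuron 0 stands for "cat", neuron 1 for "dog" (an assumption)."""
    hidden = layer(pixels, hidden_weights)
    scores = layer(hidden, output_weights)
    return "cat" if scores[0] >= scores[1] else "dog"


# Hypothetical, hand-picked multipliers; training would normally find these.
HIDDEN_WEIGHTS = [[1.0, -1.0, 0.5], [-1.0, 1.0, 0.5]]
OUTPUT_WEIGHTS = [[1.0, 0.0], [0.0, 1.0]]

print(classify([0.9, 0.1, 0.4], HIDDEN_WEIGHTS, OUTPUT_WEIGHTS))
print(classify([0.1, 0.9, 0.4], HIDDEN_WEIGHTS, OUTPUT_WEIGHTS))
```

Real networks use many more layers and smoother activation functions, but the flow of data from layer to layer is the same.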

Why using machine learning for mobile apps is a great idea

Technology has been reshaping software and customer experience for a long time, and it keeps doing so today. Customers now want every experience they have to be super-personalized. To achieve this, we need AI and machine learning.

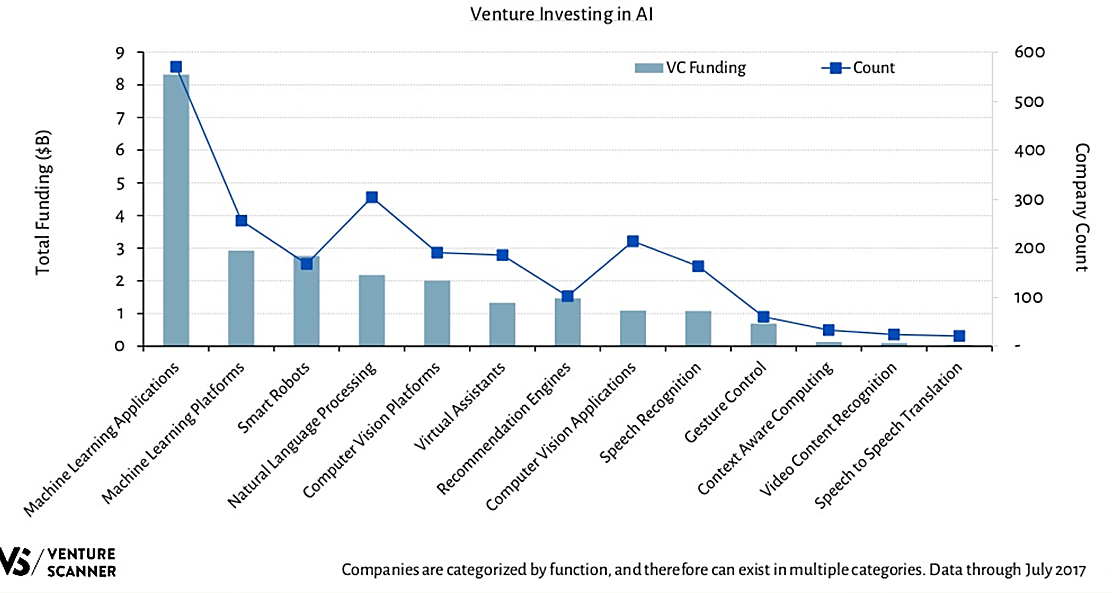

According to Venture Scanner reports, Machine Learning Applications is a leading category among funded startups, with over $8 billion in funding. These figures suggest that machine learning is attracting serious attention from startup founders and company executives alike.

Machine learning benefits for mobile apps:

- Relevant content or product

- Personalized communication with users

- Targeted ads that help drive sales

- Fast and easy search

- Engaging shopping experience for e-commerce apps

- Better incentive to use the app on a daily basis

See how business giants use machine learning in their apps:

- Starbucks: The My Starbucks Barista app lets users say what they want and places the order on their behalf, so they can pick it up at a chosen store without standing in a queue.

- Taco Bell: The TacoBot can take orders, answer questions, and recommend menu items based on a user’s preferences.

- eBay and Amazon: The apps recommend items based on the user’s previous and current behavior.

How to enhance a mobile app with machine learning technology

Machine learning in data mining for mobile applications

Data mining is the process of discovering patterns in large data sets and using them to make predictions. When you collect all the possible data about your users, you might need help sorting and analyzing it. Machine learning can do that for you. It can even find variations and subtle customer behavior patterns that humans simply cannot distinguish. This allows you to keep different groups of people interested in your app and provide them with valuable content.

Machine learning in mobile finance apps

Today mobile finance apps are playing a big role in customers’ lives. Machine learning can make your “smart bank” even smarter. ML can analyze big sets of data and modify itself to make a better user experience.

Machine learning can be implemented in finance apps in three ways:

- Predictions. Machine learning systems can analyze large amounts of data, including customers’ financial status, their behavior, market changes, and upcoming trends, and make predictions based on it.

- Security. The most important issue for every user is the safety of their money. Intelligent analysis of all ongoing activities can protect customers from fraud and encourage them to use your app.

- Personal assistance. A personal assistant, such as a chatbot, can answer your users’ questions without them having to call an information service.

Machine learning for e-commerce apps

With machine learning, you can improve the customer experience of your application and make it more personalized. For example, Amazon’s suggestion system uses machine learning algorithms that run in real time while a user is browsing. It learns on the fly, as the user goes through different pages, and analyzes their likes and dislikes.

Now, here are some benefits of machine learning for e-commerce mobile apps:

- Improved product search. Apps with machine learning understand text queries better and show more relevant results.

- Product promotion and recommendation. Based on mobile app content analysis, customer behavior, and purchase patterns, machine learning makes your app recommendations and promotions more and more relevant with every visit.

- Fraud prevention. Machine learning can monitor ongoing activity in the app without manual supervision, then detect and block suspicious behavior. It can also counter both known and previously unidentified threats in real time.

Machine learning in the healthcare mobile market

If you own a healthcare business and are thinking of creating a mobile app, you should consider building machine learning into it. Machine learning applications can track any number of your daily activities, like water intake or workouts, and learn from the data of thousands of people with diabetes to provide relevant and valuable treatment recommendations. Apps of this kind can show you if you are drinking less water than usual, or if your sugar level could rise and put you at risk.

For example, IBM Watson with its database of numerous cases of cancer can sometimes diagnose a patient even better than professionals.

Machine learning for fitness trackers and mobile apps

Today the fitness industry is hard to imagine without mobile apps, yet those apps often lack the deep insights that help users stretch their limits. With machine learning, you can turn your mobile app into a personal coach that adapts to the needs, goals, and physical condition of every single user.

For example, Optimize Fitness combines available sensor and genetic data to create a user experience that gives its users tools to improve their physical form.

How Google uses machine learning in its apps

- Google Music. Creates recommendations based on what you’re already listening to.

- Ok, Google. Speech recognition.

- Google Translate. Recognizes that a picture contains text and where it is in the picture. Translates it in real time.

- Inbox. Creates smart replies as you would, based on the way you responded in the past.

- Image Search. Creates categories that you might be looking for.

- Google Photos. Creates categories you can search through. Search for “cats” and it shows all the cat pictures you have in Google Photos.

Machine learning as an API

If the mere thought of programming a machine learning system sends shivers down your spine, there is good news: Google has developed a few APIs that you can integrate into your mobile app. These APIs are backed by pre-trained machine learning models. All you need to do is send a REST request to the API and get the result back.

Want to know the best part?

Today we’ll explain what the APIs can do and help you figure out which one will be useful for your mobile app.

Cloud Vision

Complex image detection with a simple REST request.

Core features:

- Face detection. Tells you if there are faces in the image. If so, where they are, how many faces there are, and what emotions we can find in the image (whether they are happy, sad, angry, or surprised).

- OCR (Optical Character Recognition). Can identify text in an image, tell you what language the text is in, and where the text is located inside the image.

- Explicit content detection. Tells you if the image is appropriate or not. This is useful when you’re dealing with user-generated content. You probably don’t want to have someone manually classifying these images as appropriate or not.

- Landmark detection. Tells you if there is a landmark in the picture and gives you the latitude and longitude coordinates of that landmark.

- Logo detection. Tells you if there’s a company logo present in the picture.

- Crop hints. Gives you suggested crop dimensions for your photos.

- Web annotations. Gives you some granular data on entities that are found in your image. It’ll also tell you all the other pages where the image exists on the Internet. So if you need to do copyright detection, it’ll give you the URL of the image and the URL of the page where the image is.

- Document text annotation. Improves the OCR model on large blocks of text. If you have images with lots of text, the model will extract the text from the image.
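As a rough sketch of what such a REST request can look like, the Python snippet below builds (but does not send) a request that asks for several of the features listed above in one call. The endpoint, the `key` query parameter, and the feature type names reflect the public v1 REST interface as we understand it, so check them against the current documentation. The helper name `build_vision_request` and the placeholder API key are assumptions made for this example.

```python
import base64
import json
import urllib.request

# Public REST endpoint of the Vision API (v1), as we understand it.
VISION_URL = "https://vision.googleapis.com/v1/images:annotate"


def build_vision_request(image_bytes, feature_types, api_key, max_results=5):
    """Build a POST request asking for the given features on one image.
    Hypothetical helper: it only constructs the request, it does not send it."""
    body = {
        "requests": [{
            # The image is sent inline as base64-encoded bytes.
            "image": {"content": base64.b64encode(image_bytes).decode("ascii")},
            "features": [
                {"type": feature, "maxResults": max_results}
                for feature in feature_types
            ],
        }]
    }
    return urllib.request.Request(
        f"{VISION_URL}?key={api_key}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# "YOUR_API_KEY" is a placeholder, and the bytes stand in for a real photo.
request = build_vision_request(
    b"fake image bytes",
    ["FACE_DETECTION", "TEXT_DETECTION", "SAFE_SEARCH_DETECTION"],
    api_key="YOUR_API_KEY",
)
print(request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it. In a production mobile app you would normally route the call through your own backend so the API key never ships inside the app.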

Who uses Cloud Vision:

Disney used the Vision API for a game promoting the movie “Pete’s Dragon”. In the game, users were given a quest: they received a clue and had to take a picture of the matching everyday object (a couch, a computer, and so on). If they took the right picture, the game would superimpose the image of the dragon on that object.

So Disney needed a way to verify that the user took a picture of the object they were prompted for, and the Vision API was a perfect fit. They used the label detection feature, which basically tells you what a picture shows, and they were able to verify images in the game that way.

Realtor.com (a real estate listing service) uses the Vision API in its mobile application, which runs on iOS and Android. People looking for houses can take a picture of a “for sale” sign. The app uses the Vision API’s OCR (Optical Character Recognition) to read the text in the image and then pulls up the relevant listing for that house.

Cloud Speech

This API transcribes speech in over 80 languages, supports streaming and non-streaming recognition, filters inappropriate content and lets developers integrate this functionality into their own applications.
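For the non-streaming case, a request body might be assembled roughly as follows. This is a sketch under assumptions: the endpoint and the `config` field names (`encoding`, `sampleRateHertz`, `languageCode`, `profanityFilter`) follow the public v1 REST interface as we understand it, while the helper name and the default values are made up for illustration.

```python
import base64

# Public REST endpoint for non-streaming recognition (v1), as we understand it.
SPEECH_URL = "https://speech.googleapis.com/v1/speech:recognize"


def build_speech_request_body(audio_bytes, language_code="en-US",
                              sample_rate_hertz=16000, filter_profanity=True):
    """Build the JSON body for a non-streaming recognition request.
    Hypothetical helper; assumes raw 16-bit PCM (LINEAR16) audio."""
    return {
        "config": {
            "encoding": "LINEAR16",
            "sampleRateHertz": sample_rate_hertz,
            "languageCode": language_code,
            # Asks the service to mask inappropriate words in the transcript.
            "profanityFilter": filter_profanity,
        },
        # Short audio clips are sent inline as base64-encoded bytes.
        "audio": {"content": base64.b64encode(audio_bytes).decode("ascii")},
    }


body = build_speech_request_body(b"\x00\x01", language_code="ko-KR")
print(body["config"])
```

The body would then be sent as a JSON POST to `SPEECH_URL`. Streaming recognition works differently and is not covered by this sketch.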

Who uses Cloud Speech:

Azar is a chat application that uses the Cloud Speech API and the Cloud Translation API to translate audio between matches. Whenever two people don’t speak the same language, the app transcribes the audio with the Speech API and then translates it into the other person’s mother tongue with the Translation API.

Cloud Translation

With the Translation API, you can translate your text into over 100 languages. This API lets you integrate the functionality of Google Translate into your own applications.
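A translation round trip can be sketched like this. The endpoint and the `q`, `target`, `source`, and `format` fields follow the public v2 REST interface as we understand it. The two helper functions are hypothetical, and `sample_response` is a hand-written stand-in for a real reply, not actual API output.

```python
# Public REST endpoint of the Translation API (v2), as we understand it.
TRANSLATE_URL = "https://translation.googleapis.com/language/translate/v2"


def build_translate_body(texts, target, source=None):
    """Build the JSON body for translating a list of strings.
    If source is omitted, the service is expected to detect the language."""
    body = {"q": list(texts), "target": target, "format": "text"}
    if source is not None:
        body["source"] = source
    return body


def extract_translations(response):
    """Pull the translated strings out of a parsed JSON response."""
    return [item["translatedText"] for item in response["data"]["translations"]]


# Hand-written example shaped like a v2 response; not real API output.
sample_response = {
    "data": {
        "translations": [
            {"translatedText": "Hallo Welt", "detectedSourceLanguage": "en"}
        ]
    }
}

print(build_translate_body(["Hello world"], target="de"))
print(extract_translations(sample_response))
```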

Who uses Cloud Translation:

Airbnb uses Cloud Translation API. 60% of Airbnb bookings connect people who use the app in different languages. Using the Translation API to translate listings, reviews, and conversations significantly improves a guest’s likelihood to book.

Cloud Video Intelligence

Cloud Video Intelligence is the newest addition to the Cloud Machine Learning APIs suite. It can understand your video content at the shot, frame, or video level.

The API can identify different things in a video and send you to the part of the video where a chosen thing appears. Let’s say you are looking for football footage but have hundreds of videos. The API will find the matching videos for you and let you skip directly to the scenes relevant to football.
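The "skip directly to the scene" step comes down to reading time offsets out of the annotation results. The sketch below assumes the label detection result has a `shotLabelAnnotations` list whose entries carry an `entity.description` and a list of `segments` with string offsets such as "12.5s", which is how we understand the v1 JSON format. The sample data is hand-written for illustration, and the helper names and confidence cutoff are assumptions.

```python
def parse_offset(offset):
    """Convert a duration string such as "12.5s" into seconds."""
    return float(offset.rstrip("s"))


def find_scenes(annotation_result, label, min_confidence=0.5):
    """Return sorted (start, end) times in seconds of shots tagged with label.
    Hypothetical helper over one parsed annotation result."""
    scenes = []
    for annotation in annotation_result.get("shotLabelAnnotations", []):
        if annotation["entity"]["description"].lower() != label.lower():
            continue
        for item in annotation["segments"]:
            if item.get("confidence", 0.0) < min_confidence:
                continue
            segment = item["segment"]
            # A missing offset is treated as the start of the video here.
            start = parse_offset(segment.get("startTimeOffset", "0s"))
            end = parse_offset(segment.get("endTimeOffset", "0s"))
            scenes.append((start, end))
    return sorted(scenes)


# Hand-written data shaped like a label detection result; not real output.
sample_result = {
    "shotLabelAnnotations": [
        {
            "entity": {"description": "football"},
            "segments": [
                {"segment": {"startTimeOffset": "12.5s", "endTimeOffset": "30s"},
                 "confidence": 0.9},
                {"segment": {"startTimeOffset": "95s", "endTimeOffset": "120.25s"},
                 "confidence": 0.4},
            ],
        },
        {
            "entity": {"description": "crowd"},
            "segments": [
                {"segment": {"startTimeOffset": "0s", "endTimeOffset": "12.5s"},
                 "confidence": 0.8},
            ],
        },
    ]
}

print(find_scenes(sample_result, "football"))
```

A video player in the app could then seek to the first returned start time.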

Who uses Cloud Video Intelligence:

A company using Cloud Video Intelligence is Cantemo. They are a media asset management company. And all of their customers have lots of video content. Users upload their content to Cantemo’s platform. The company helps them transcode and edit it. They’re enabling their customers to make their video content searchable so they can search within their videos with the help of the video API.

Cloud Natural Language

This API extracts entities, sentiment, and syntax from text.

- Analyze entities: Antwerp is a city in Belgium. Can identify different entities like people, locations, organizations, events, etc. The JSON response gives you the following entity information: name, type, a Wikipedia URL and an id that maps to Google Knowledge Graph.

- Analyze sentiment: Antwerp is my favorite city. Returns a score on a scale from -1 to 1 that tells you how positive or negative the text is, and a magnitude from 0 to infinity that tells you how strong the sentiment is regardless of whether it is positive or negative. The magnitude is not normalized, so longer texts tend to produce larger values.

- Analyze syntax: Antwerp hosted the 1920 Summer Olympics. The JSON response gives you a dependency parse tree that tells you which words in a sentence depend on each other. Then you’ll get the parse label, which tells you the role of each word in the sentence (root, nn, det, nsubj, ccomp, dobj, p, etc). Then you’ll get a part of speech, which tells you if the word is a noun, a verb, or an adjective. Then you’ll get a lemma, the canonical form of the word, which is useful if you’re counting how many times a specific word or term is used to describe your product. You also get some additional morphology details on the text.
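Taking the sentiment method from the list above as an example, the sketch below builds a request body and turns the returned score and magnitude into a rough label. The endpoint and the `document`, `encodingType`, and `documentSentiment` field names follow the public v1 REST interface as we understand it. The cutoff values and the helper names are arbitrary assumptions for illustration and would need tuning for your own texts.

```python
# Public REST endpoint for sentiment analysis (v1), as we understand it.
NL_SENTIMENT_URL = "https://language.googleapis.com/v1/documents:analyzeSentiment"


def build_sentiment_body(text):
    """Build the JSON body for a sentiment request on plain text."""
    return {
        "document": {"type": "PLAIN_TEXT", "content": text},
        "encodingType": "UTF8",
    }


def describe_sentiment(response, score_cutoff=0.25, magnitude_cutoff=1.0):
    """Map score and magnitude to a coarse label.
    The cutoffs are assumptions of this sketch, not values from the API."""
    sentiment = response["documentSentiment"]
    score = sentiment["score"]
    magnitude = sentiment["magnitude"]
    if score >= score_cutoff:
        return "positive"
    if score <= -score_cutoff:
        return "negative"
    # A score near zero with a high magnitude suggests strong feelings that
    # cancel out; a low magnitude suggests little emotion at all.
    return "mixed" if magnitude >= magnitude_cutoff else "neutral"


print(build_sentiment_body("Antwerp is my favorite city."))
# Hand-written response fragment; not real API output.
print(describe_sentiment({"documentSentiment": {"score": 0.8, "magnitude": 0.8}}))
```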

Who uses Cloud Natural Language:

Wootric, a customer feedback platform, uses this API. Wootric’s job is to help its customers make sense of their open-ended feedback. Open-ended text feedback is really difficult to make sense of at scale, and that’s where the Natural Language API comes into play.

They actually use all of the methods of the Natural Language API. They use sentiment analysis to check whether the numeric score a person gave lines up with the open-ended feedback they sent. They also use entity and syntax analysis to pull out the key subjects and terms from the feedback and then, if necessary, route it to the right person in near real time to respond.

Conclusion

There are numerous ways to use machine learning in mobile apps. Choose the path that suits your business best. Machine learning is here to revolutionize modern mobile app development and customer experience. So don’t get left behind: start planning how to implement machine learning in your application today!